title: README

emoji: 🐠

colorFrom: pink

colorTo: green

sdk: static

pinned: false

Introducing Lamini, the LLM Engine for Rapid Customization

- Lamini gives every developer the superpowers that took the world from GPT-3 to ChatGPT!

- Today, you can try out our open dataset generator for training instruction-following LLMs (like ChatGPT) on GitHub.

- Sign up for early access to our full LLM training module, including enterprise features like cloud prem deployments.

- Playground: try out our first CC-BY licensed, instruction-fine-tuned model: https://huggingface.co/spaces/lamini/instruct-playground

Training LLMs should be as easy as prompt-tuning 🦾

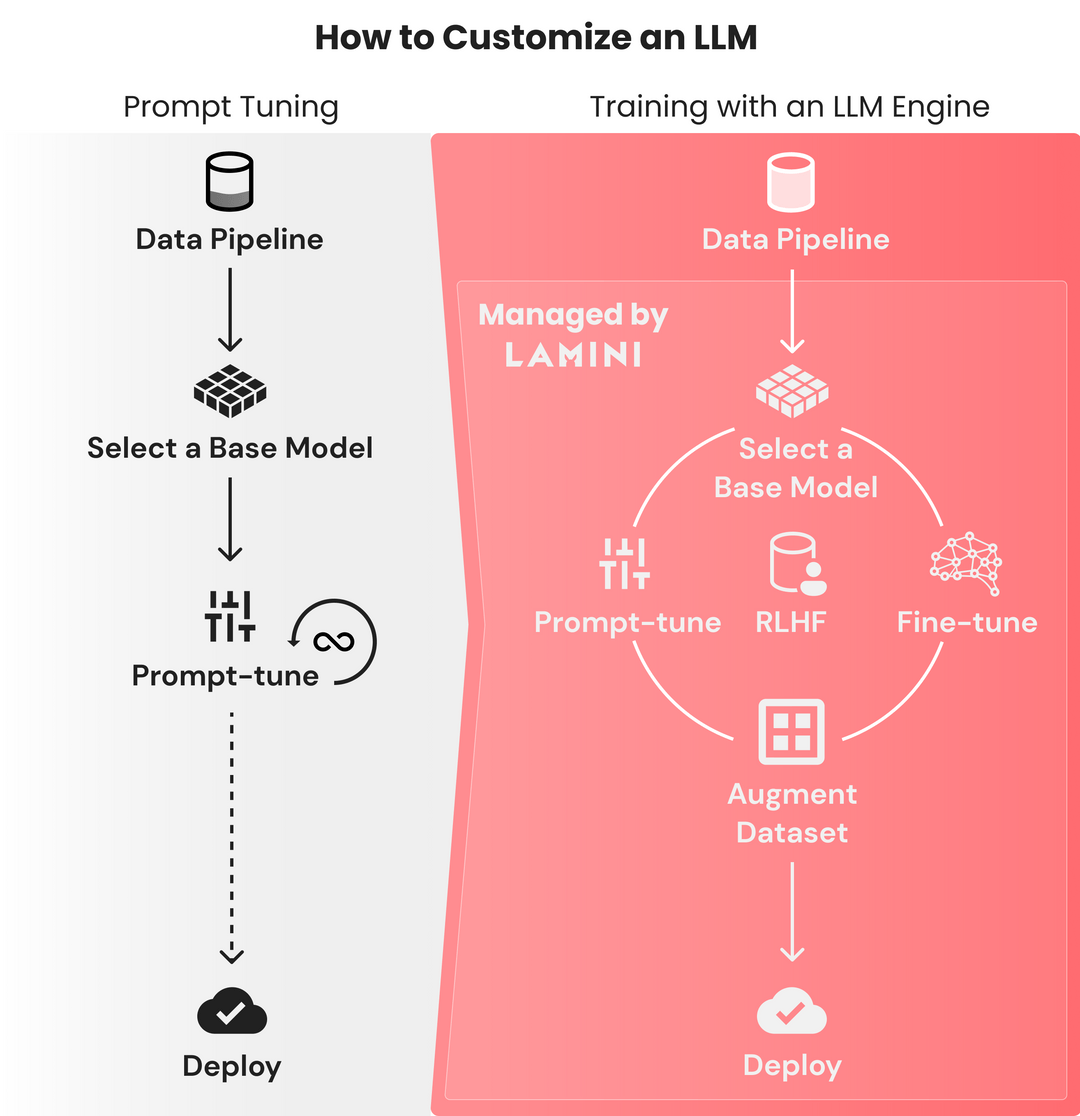

Why is writing a prompt so easy, but training an LLM from a base model still so hard? Iteration cycles for finetuning on modest datasets are measured in months because it takes significant time to figure out why finetuned models fail. Conversely, prompt-tuning iterations are on the order of seconds, but performance plateaus in a matter of hours. Only a limited amount of data can be crammed into the prompt, not the terabytes of data in a warehouse.

It took OpenAI months with an incredible ML team to fine-tune and run RLHF on their base GPT-3 model that was available for years — creating what became ChatGPT. This training process is only accessible to large ML teams, often with PhDs in AI. Technical leaders at Fortune 500 companies have told us:

- “Our team of 10 machine learning engineers hit the OpenAI finetuning API, but our model got worse — help!”

- “I don’t know how to make the best use of my data. I’ve exhausted all the prompt magic we can summon from tutorials online.”

That’s why we’re building Lamini: to give every developer the superpowers that took the world from GPT-3 to ChatGPT.

Rapidly train LLMs to be as good as ChatGPT from any base model 🚀

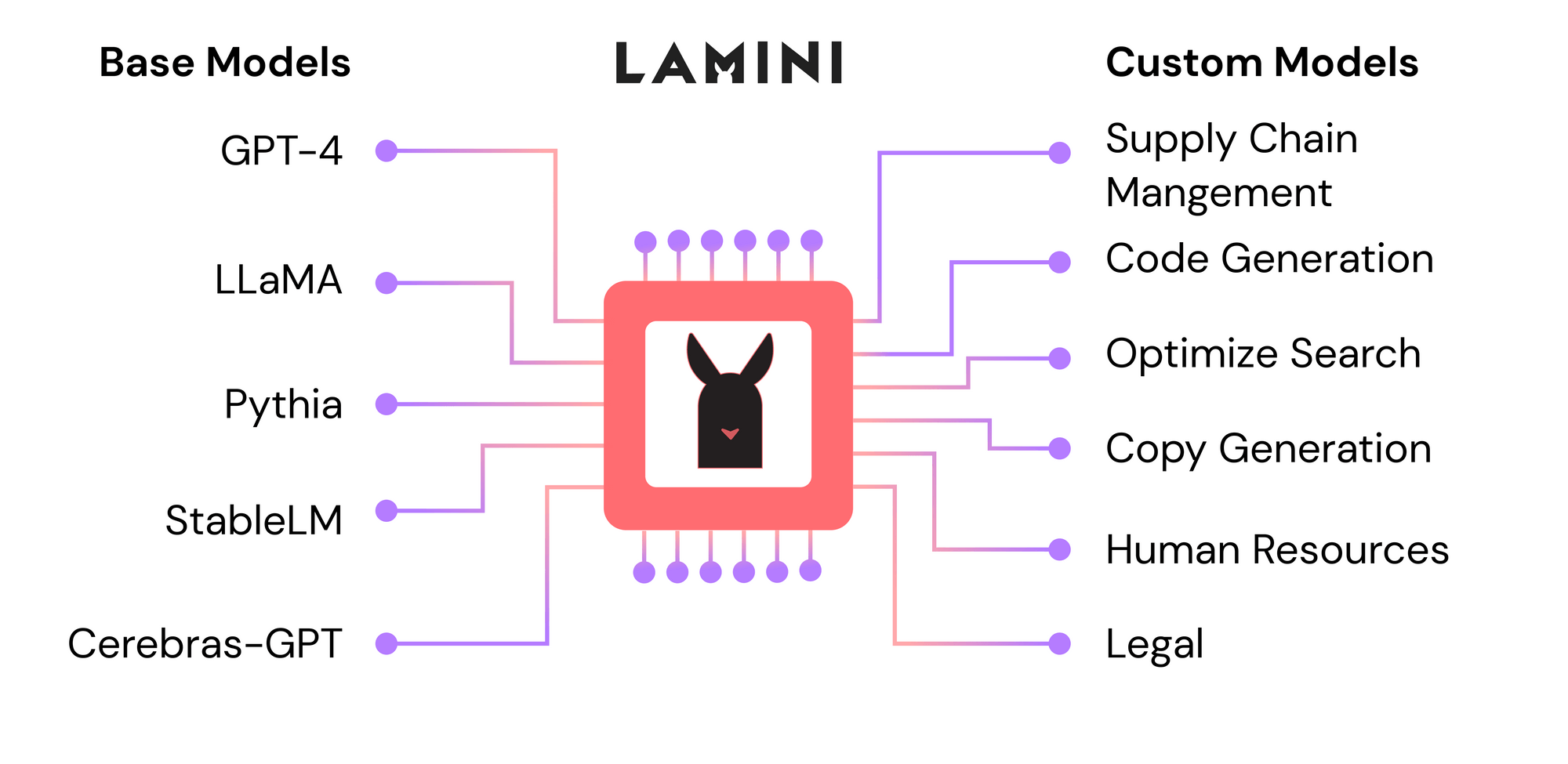

Lamini is an LLM engine that allows any developer, not just machine learning experts, to train high-performing LLMs on large datasets using the Lamini library.

The optimizations in this library reach far beyond what’s available to developers now, from more challenging ones like RLHF to simpler ones like reducing hallucinations.

Lamini runs across platforms, from OpenAI’s models to open-source ones on HuggingFace, with more to come soon. We are agnostic to base models, as long as there’s a way for our engine to train and run them. In fact, Lamini makes it easy to run multiple base model comparisons in just a single line of code.

Now that you know a bit about where we’re going, we’re excited to release our first major community resource today!

Available now: a hosted data generator for LLM training 🎉

Steps to a ChatGPT-like LLM for your use case 1️⃣2️⃣3️⃣

Here are the steps to get an instruction-following LLM like ChatGPT to handle your use case:

(Show me the code: Play with our dataset generator for creating ChatGPT-like datasets.)

- Try prompt-tuning ChatGPT or another model. You can use the Lamini library’s APIs to quickly prompt-tune across different models, swapping between OpenAI and open-source models in just one line of code. We optimize the prompt for you, so you can take advantage of different models without worrying about the right prompt template for each one.

- Build a large dataset of input-output pairs. These will show your model how it should respond to its inputs, whether that's following instructions given in English, or responding in JSON. Today, we’re releasing a repo with just a few lines of code using the Lamini library to generate 50k data points from as few as 100 data points. We include an open-source 50k dataset in the repo. (More details below on how you can do this!)

- Finetune a base model on your large dataset. Alongside the dataset generator, we’re also releasing an LLM that is finetuned on the generated data using Lamini. You can also hit OpenAI’s finetuning API as a great starting point.

- Run RLHF on your finetuned model. You’ll need an ML team and human labeling team to do this today.

- Deploy to your cloud by simply hitting the API endpoint from your product or feature.

Our goal is for Lamini to handle this entire process, and we’re actively building steps 2-4 (sign up for early access!).

Step #1: A ChatGPT-like dataset generator 🎉

ChatGPT took the world by storm because it could follow instructions from the user, while the base model that it was trained from (GPT-3) couldn’t do that consistently. For example, if you asked the base model a question, it might generate another question instead of answering it. 🤔

For your application, you'll probably want similar "instruction-following" data, but you might want something completely different, like responding only in JSON.

You'll need a dataset of ~50k instruction-following examples to start. Don't panic. You can now use Lamini’s dataset generator on GitHub to turn as few as 100 examples into as many as 50k in just a few lines of code.

You can customize the initial 100+ instructions so that the LLM follows instructions in your own vertical. Once you have those, submit them to the Lamini dataset generator, and voilà: you get a large instruction-following dataset on your use case as a result!
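Before submitting your seed examples, it can help to check that each one is a well-formed instruction/response pair. The snippet below is a minimal sketch, not part of the Lamini library: the field names `instruction` and `response`, the helper names, and the JSON Lines output are all assumptions made for illustration, so adapt them to whatever format the generator repo actually expects.

```python
import json

# Assumed field names for a seed pair; not a documented Lamini schema.
REQUIRED_FIELDS = ("instruction", "response")


def validate_seed_pairs(pairs):
    """Return cleaned pairs; raise on malformed pairs, skip duplicate instructions."""
    seen = set()
    cleaned = []
    for i, pair in enumerate(pairs):
        for field in REQUIRED_FIELDS:
            value = pair.get(field)
            if not isinstance(value, str) or not value.strip():
                raise ValueError(f"pair {i} is missing a non-empty '{field}'")
        # Treat instructions that differ only in case or outer whitespace as duplicates.
        key = pair["instruction"].strip().lower()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({field: pair[field].strip() for field in REQUIRED_FIELDS})
    return cleaned


def to_jsonl(pairs):
    """Serialize pairs as JSON Lines, one pair per line."""
    return "\n".join(json.dumps(pair, ensure_ascii=False) for pair in pairs)


# Hypothetical seed examples for a customer-support vertical.
seed_pairs = [
    {"instruction": "Summarize our refund policy in one sentence.",
     "response": "Refunds are available within 30 days of purchase."},
    {"instruction": "Return the order status as JSON.",
     "response": '{"status": "shipped"}'},
    {"instruction": "summarize our refund policy in one sentence. ",
     "response": "Duplicate of the first example."},
]

cleaned = validate_seed_pairs(seed_pairs)
print(to_jsonl(cleaned))
```

Note that the second example answers in JSON rather than English: the seed pairs are where you show the model the response style you want.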

How the dataset generator works

The Lamini dataset generator, inspired by Stanford Alpaca, is a pipeline of LLMs that takes your original small set of 100+ instructions, paired with their expected responses, and generates 50k+ new pairs. This generation pipeline uses the Lamini library to define and call LLMs that generate different, yet similar, pairs of instructions and responses. Trained on this data, your LLM will get better at following these instructions.

We provide a good default for the generation pipeline that uses open-source LLMs, which we call Lamini Open and Lamini Instruct. With new LLMs being released each day, we update the defaults to the best-performing models. As of this release, we are using XX for Lamini Open and YY for Lamini Instruct. Lamini Open generates more instructions, and Lamini Instruct generates paired responses to those instructions. The final generated dataset is available for your free commercial use (CC-BY license).
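To make the two-stage flow concrete, here is a rough sketch of how such a pipeline could be wired together. It does not use the Lamini library: the two models are passed in as plain Python callables, and `generate_dataset`, its parameters, and the stub models are hypothetical names invented for this illustration. One model proposes a new instruction from a few sampled seed pairs, the other answers it, and duplicate instructions are skipped.

```python
import random
from typing import Callable, Dict, List

Pair = Dict[str, str]


def generate_dataset(
    seed_pairs: List[Pair],
    instruction_model: Callable[[List[Pair]], str],
    response_model: Callable[[str], str],
    target_size: int,
    examples_per_prompt: int = 3,
    max_attempts: int = 1000,
    seed: int = 0,
) -> List[Pair]:
    """Grow a dataset from seed pairs using two models (illustrative sketch only)."""
    rng = random.Random(seed)
    # Track instructions already present so we never emit a duplicate.
    seen = {pair["instruction"].strip().lower() for pair in seed_pairs}
    generated: List[Pair] = []
    attempts = 0
    while len(generated) < target_size and attempts < max_attempts:
        attempts += 1
        # Stage 1: show a few seed pairs as in-context examples and ask for a new instruction.
        k = min(examples_per_prompt, len(seed_pairs))
        examples = rng.sample(seed_pairs, k=k)
        instruction = instruction_model(examples).strip()
        key = instruction.lower()
        if not instruction or key in seen:
            continue  # empty or duplicate instruction, try again
        seen.add(key)
        # Stage 2: ask the second model for the paired response.
        response = response_model(instruction).strip()
        if response:
            generated.append({"instruction": instruction, "response": response})
    return generated


def make_stub_instruction_model() -> Callable[[List[Pair]], str]:
    """Stand-in for an instruction-generating LLM; every third call repeats itself."""
    calls = {"n": 0}

    def model(examples: List[Pair]) -> str:
        calls["n"] += 1
        n = calls["n"] - 1 if calls["n"] % 3 == 0 else calls["n"]
        return f"Explain topic number {n}."

    return model


def stub_response_model(instruction: str) -> str:
    """Stand-in for a response-generating LLM."""
    return f"(stub answer to) {instruction}"


seeds = [
    {"instruction": "Explain what a base model is.", "response": "A model before fine-tuning."},
    {"instruction": "Define RLHF.", "response": "Reinforcement learning from human feedback."},
]
dataset = generate_dataset(seeds, make_stub_instruction_model(), stub_response_model, target_size=4)
print(len(dataset), "generated pairs")
```

In a real run the two stubs would be replaced by calls to actual LLMs, and you would likely add further quality filtering on top of the simple duplicate check shown here.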

The Lamini library allows you to swap our defaults for other open-source or OpenAI models in just one line of code. Note that while we find OpenAI models to perform better on average, their license restricts commercial use of generated data for training models similar to ChatGPT.
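One common way to get this kind of one-line swap is to keep each model behind a shared interface together with its own prompt template. The sketch below illustrates that pattern only and is not the Lamini library's API: `Backend`, `BACKENDS`, `run`, the model names, and the templates are all hypothetical, and the "models" are echo stubs so the example runs without any network access.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Backend:
    """A model plus the prompt template it expects (hypothetical structure)."""
    name: str
    prompt_template: str
    complete: Callable[[str], str]


def make_echo_model(tag: str) -> Callable[[str], str]:
    """Stub completion function that echoes the prompt, standing in for a real LLM call."""
    def complete(prompt: str) -> str:
        return f"[{tag}] {prompt}"
    return complete


# Hypothetical registry: each entry pairs a model with its own template.
BACKENDS: Dict[str, Backend] = {
    "open-model": Backend(
        name="open-model",
        prompt_template="### Instruction:\n{instruction}\n\n### Response:\n",
        complete=make_echo_model("open-model"),
    ),
    "hosted-api": Backend(
        name="hosted-api",
        prompt_template="{instruction}",
        complete=make_echo_model("hosted-api"),
    ),
}


def run(instruction: str, model_name: str) -> str:
    """Format the instruction with the chosen model's template and call the model."""
    backend = BACKENDS[model_name]
    prompt = backend.prompt_template.format(instruction=instruction)
    return backend.complete(prompt)


MODEL_NAME = "open-model"  # the one line to change when swapping models
print(run("List three kinds of fish.", MODEL_NAME))

# Comparing several models on the same instruction is then a single expression.
comparison = {name: run("List three kinds of fish.", name) for name in BACKENDS}
```

Because the template travels with the model, callers only ever pass the raw instruction and never need to know which prompt format a given model prefers.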

If you’re interested in more details on how our dataset generator works, read more or run it here.

We fine-tuned a custom model and hosted it 🎉

We used the pipeline above to generate a filtered dataset of around 37k question-and-response samples. But that's not all! We've also fine-tuned a language model based on EleutherAI’s Pythia model. It is hosted on Hugging Face as lamini/instruct-tuned-2.8b and is available for use under a CC-BY license here. This model is optimized for generating accurate and relevant responses to instruction-based tasks, making it well suited to tasks like question answering, code autocomplete, and chatbots. Feel free to run your own queries on our playground!

Pushing the boundaries of fast & usable generative AI

We’re excited to dramatically improve the performance of training LLMs and expand who is able to train them. These two frontiers are intertwined: with faster, more effective iteration cycles, more people will be able to build these models, beyond just fiddling with prompts.

At Lamini, our mission is to help all software engineers build and ship their own production-grade large language models. Lamini is the world's most powerful LLM engine, unlocking the power of generative AI for every company by putting their data to work. The future of software is powered by LLMs, driven forward by data and compute scaling laws.