Upload folder using huggingface_hub #2

Opened by sharpenb

- README.md +39 -16

- config.json +1 -1

- model → model/optimized_model.pkl +2 -2

- model/smash_config.json +3 -0

- plots.png +0 -0

README.md

CHANGED

|

@@ -21,29 +21,52 @@ metrics:

|

|

| 21 |

|

| 22 |

# Simply make AI models cheaper, smaller, faster, and greener!

|

| 23 |

|

| 24 |

## Results

|

| 25 |

|

| 26 |

|

| 27 |

|

| 28 |

## Setup

|

| 29 |

|

| 30 |

-

You can run the smashed model

|

| 31 |

-

|

| 32 |

-

|

| 33 |

```bash

|

| 34 |

-

|

| 35 |

-

|

| 36 |

```

|

| 37 |

-

Alternatively, you can download them manually.

|

| 38 |

-

3. Loading the model.

|

| 39 |

-

4. Running the model.

|

| 40 |

-

You can achieve this by running the following code:

|

| 41 |

-

```python

|

| 42 |

-

from pruna_engine.PrunaModel import PrunaModel # Step (1): install and import `pruna-engine` package.

|

| 43 |

-

model_path = "runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed/model" # Step (2): specify the downloaded model path.

|

| 44 |

-

smashed_model = PrunaModel.load_model(model_path) # Step (3): load the model.

|

| 45 |

-

y = smashed_model(prompt="a silly prune with a face in high definition", image_height=512, image_width=512)[0] # Step (4): run the model.

|

| 46 |

-

```

|

| 47 |

|

| 48 |

## Configurations

|

| 49 |

|

|

@@ -51,7 +74,7 @@ The configuration info are in `config.json`.

|

|

| 51 |

|

| 52 |

## License

|

| 53 |

|

| 54 |

-

We follow the same license as the original model. Please check the license of the original model before using this model.

|

| 55 |

|

| 56 |

## Want to compress other models?

|

| 57 |

|

|

|

|

| 21 |

|

| 22 |

# Simply make AI models cheaper, smaller, faster, and greener!

|

| 23 |

|

| 24 |

+

[Twitter](https://twitter.com/PrunaAI)

|

| 25 |

+

[GitHub](https://github.com/PrunaAI)

|

| 26 |

+

[LinkedIn](https://www.linkedin.com/company/93832878/admin/feed/posts/?feedType=following)

|

| 27 |

+

|

| 28 |

+

- Give a thumbs up if you like this model!

|

| 29 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 30 |

+

- Request access to easily compress your *own* AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 31 |

+

- Read the documentation to learn more [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/).

|

| 32 |

+

- Share feedback and suggestions on the Slack of Pruna AI (Coming soon!).

|

| 33 |

+

|

| 34 |

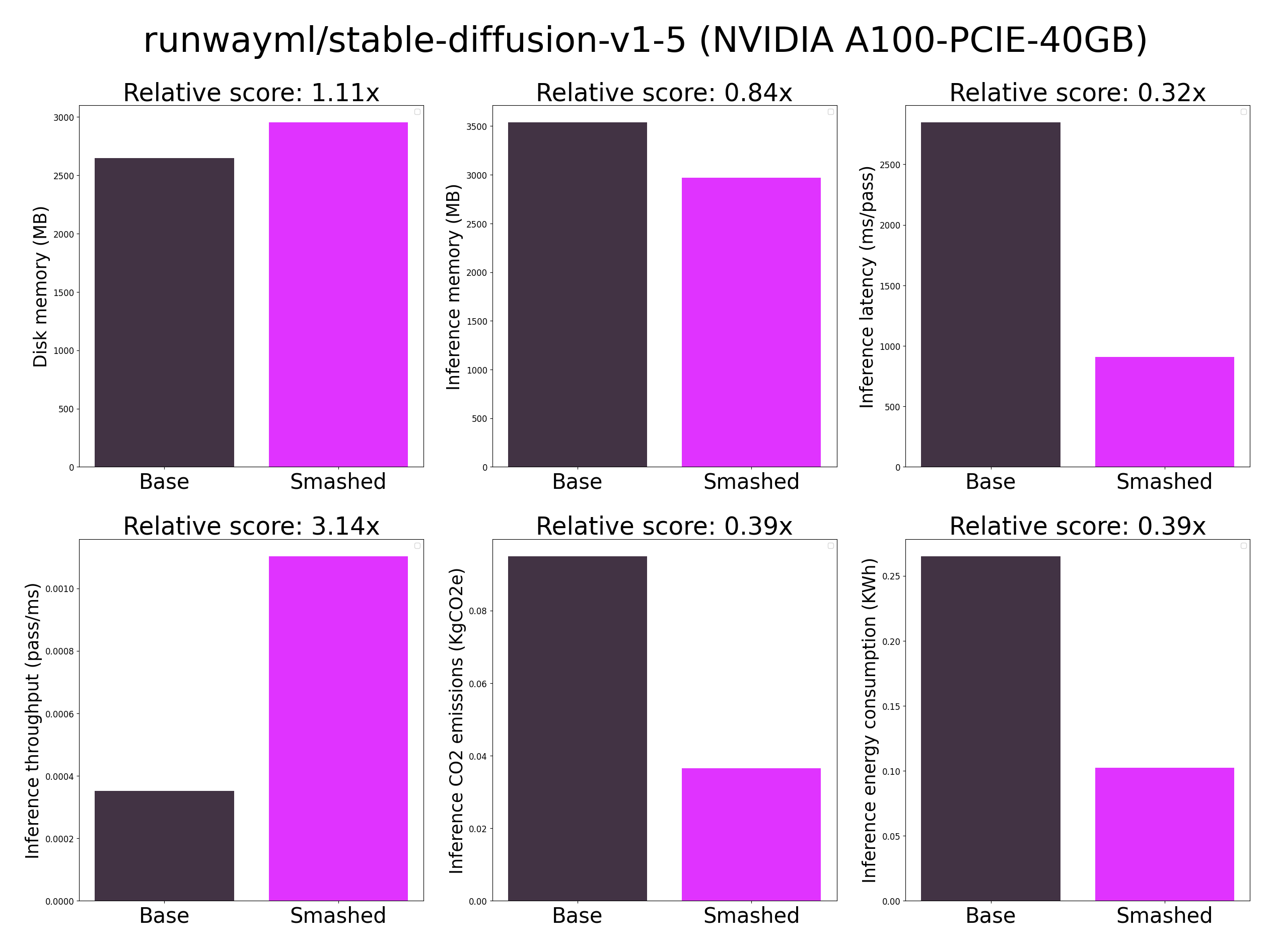

## Results

|

| 35 |

|

| 36 |

|

| 37 |

|

| 38 |

+

These results were obtained on an NVIDIA A100-PCIE-40GB with the configuration described in `config.json`. Results may vary in other settings (e.g. other hardware, image size, batch size, ...).

|

| 39 |

+

|

| 40 |

## Setup

|

| 41 |

|

| 42 |

+

You can run the smashed model with these steps:

|

| 43 |

+

0. Check that you have CUDA installed by running `nvcc --version`. If it is missing, you can install it with `conda install nvidia/label/cuda-12.1.0::cuda`.

|

| 44 |

+

1. Install the `pruna-engine` package, available [here](https://pypi.org/project/pruna-engine/) on PyPI. Installation might take 15 minutes.

|

| 45 |

```bash

|

| 46 |

+

pip install pruna-engine[gpu] --extra-index-url https://pypi.nvidia.com --extra-index-url https://pypi.ngc.nvidia.com

|

| 47 |

+

```

|

| 48 |

+

2. Download the model files using one of these three options.

|

| 49 |

+

- Option 1 - Use command line interface (CLI):

|

| 50 |

+

```bash

|

| 51 |

+

mkdir runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed

|

| 52 |

+

huggingface-cli download PrunaAI/runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed --local-dir runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed --local-dir-use-symlinks False

|

| 53 |

+

```

|

| 54 |

+

- Option 2 - Use Python:

|

| 55 |

+

```python

|

| 56 |

+

import subprocess

|

| 57 |

+

repo_name = "runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed"

|

| 58 |

+

subprocess.run(["mkdir", repo_name])

|

| 59 |

+

subprocess.run(["huggingface-cli", "download", 'PrunaAI/'+ repo_name, "--local-dir", repo_name, "--local-dir-use-symlinks", "False"])

|

| 60 |

+

```

|

| 61 |

+

- Option 3 - Download them manually on the HuggingFace model page.

|

| 62 |

+

3. Load & run the model.

|

| 63 |

+

```python

|

| 64 |

+

from pruna_engine.PrunaModel import PrunaModel

|

| 65 |

+

|

| 66 |

+

model_path = "PrunaAI/runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed/model" # Specify the downloaded model path.

|

| 67 |

+

smashed_model = PrunaModel.load_model(model_path) # Load the model.

|

| 68 |

+

y = smashed_model(x) # Run the model, where x is the input the model expects.

|

| 69 |

```
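As an alternative to calling `huggingface-cli` through `subprocess`, the download step can be sketched with the `huggingface_hub` Python API. This is a minimal sketch, not part of the PR: the helper names `local_dir_for` and `download_smashed_model` are hypothetical, and it assumes `huggingface_hub` is installed and that the local folder should be named after the repo without its owner, as in the CLI example.

```python
from pathlib import Path

REPO_ID = "PrunaAI/runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed"


def local_dir_for(repo_id: str) -> Path:
    """Return the local folder name: the repo name without the owner prefix."""
    return Path(repo_id.split("/")[-1])


def download_smashed_model(repo_id: str = REPO_ID) -> Path:
    """Download all files of the repo and return the path of its `model` folder."""
    # Imported lazily so the path helper above works without huggingface_hub.
    from huggingface_hub import snapshot_download

    target = local_dir_for(repo_id)
    # Portable replacement for `subprocess.run(["mkdir", ...])`.
    target.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target / "model"
```

The returned path points at the local `model` folder, which is the kind of path passed to `PrunaModel.load_model` in the load step.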

|

| 70 |

|

| 71 |

## Configurations

|

| 72 |

|

|

|

|

| 74 |

|

| 75 |

## License

|

| 76 |

|

| 77 |

+

We follow the same license as the original model. Please check the license of the original model, runwayml/stable-diffusion-v1-5, before using this model.

|

| 78 |

|

| 79 |

## Want to compress other models?

|

| 80 |

|

config.json

CHANGED

|

@@ -1 +1 @@

|

|

| 1 |

-

{"

|

|

|

|

| 1 |

+

{"pruners": "None", "pruning_ratio": 0.0, "factorizers": "None", "quantizers": "None", "n_quantization_bits": 32, "output_deviation": 0.01, "compilers": "['diffusers2']", "static_batch": true, "static_shape": false, "controlnet": "None", "unet_dim": 4, "device": "cuda", "save_dir": "/ceph/hdd/staff/charpent/models/.models/optimized_model", "batch_size": 1, "max_batch_size": 1, "image_height": 512, "image_width": 512, "version": "1.5", "task": "txt2img", "model_name": "runwayml/stable-diffusion-v1-5", "weight_name": "None", "save_load_fn": "stable_fast"}

|
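Several values in the new `config.json` are Python literals stored as JSON strings (`"None"`, `"['diffusers2']"`), so a plain `json.loads` leaves them as text. The sketch below shows one way to normalize them when reading the file. The helper names are hypothetical, and `CONFIG_TEXT` is a subset of the keys shown above with their values copied verbatim.

```python
import ast
import json

# Subset of the config.json added in this PR (values copied verbatim).
CONFIG_TEXT = """{"pruners": "None", "quantizers": "None", "n_quantization_bits": 32,
"compilers": "['diffusers2']", "static_batch": true, "image_height": 512,
"version": "1.5", "device": "cuda"}"""


def normalize(value):
    """Convert string-encoded literals into Python objects; leave the rest as-is."""
    if value == "None":
        return None
    if isinstance(value, str) and value.startswith("["):
        # Safe evaluation of a list literal such as "['diffusers2']".
        return ast.literal_eval(value)
    return value


def load_config(text: str) -> dict:
    """Parse the JSON text and normalize every top-level value."""
    return {key: normalize(value) for key, value in json.loads(text).items()}


config = load_config(CONFIG_TEXT)
print(config["compilers"], config["pruners"])
```

Only `"None"` and bracketed strings are converted, so ordinary strings such as the version `"1.5"` are not silently turned into numbers.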

model → model/optimized_model.pkl

RENAMED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:32f59a4e05e4485e7bb0f5ff093fca7256dc823c5db956cbbbeeba43f15ca2e1

|

| 3 |

+

size 2743389214

|
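The three lines above are a Git LFS pointer: the repository stores only the spec version, the SHA-256 digest (`oid`), and the byte size of the real file. A downloaded `optimized_model.pkl` could be checked against the pointer roughly as follows. This is a minimal sketch, and the function names are hypothetical.

```python
import hashlib
from pathlib import Path

# Pointer content for model/optimized_model.pkl, as shown in the diff.
POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:32f59a4e05e4485e7bb0f5ff093fca7256dc823c5db956cbbbeeba43f15ca2e1
size 2743389214
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each `key value` line of a Git LFS pointer into named fields."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line.strip())
    algorithm, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algorithm": algorithm,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: Path, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Check a local file against the size and SHA-256 digest of its pointer."""
    if path.stat().st_size != pointer["size"]:
        return False  # cheap size check first, before hashing gigabytes
    sha = hashlib.sha256()
    with path.open("rb") as handle:
        # Hash in chunks so a multi-gigabyte file is never fully in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            sha.update(chunk)
    return sha.hexdigest() == pointer["digest"]
```

For example, `matches_pointer(Path("model/optimized_model.pkl"), parse_lfs_pointer(POINTER_TEXT))` would return `True` only for a complete, uncorrupted download.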

model/smash_config.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8465e720e5d0db8d543ba6f1cc3bef9e7dd075cb900b907891fcd54a4a97ccaa

|

| 3 |

+

size 742

|

plots.png

CHANGED

|

|